Search engines, AI platforms, and social networks all rely on automated bots to discover and index your website’s content. According to Imperva’s 2024 Bad Bot Report, bots now account for nearly 50% of all internet traffic, with both helpful crawlers and malicious scrapers visiting your pages daily.

Understanding which web crawlers are accessing your site, and what they do with your content, is essential for SEO, security, and performance. In 2026, the web crawlers list has expanded significantly beyond traditional search engine bots to include AI training crawlers from OpenAI, Anthropic, and Google.

This comprehensive crawler list covers the 15 most common bots and spiders you will encounter, organized by category, along with their user-agent strings, what they do, and how to control their access. Bookmark this web crawler list as a quick reference for identifying bot traffic in your server logs.

What Are Web Crawlers?

A web crawler (also called a web spider, bot, or robot) is an automated program that systematically browses the internet to discover, read, and index web pages. Crawlers follow links from one page to another, building a map of the web that search engines and other services use to organize information.

Googlebot is the most well-known web crawler, responsible for indexing billions of pages for Google Search. But dozens of other crawlers operate across the web, each serving a different purpose.

How Do Web Crawlers Work?

A web crawler starts with a seed list of URLs. It visits each URL, reads the page content and HTML structure, extracts all the links it finds, and adds those new URLs to its queue. The crawler then repeats this process continuously.
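The loop described above can be sketched in a few lines. This is a minimal illustration, not how any particular search engine implements crawling: the `FAKE_WEB` dictionary is a hypothetical in-memory stand-in for real HTTP fetches, and the `crawl` and `LinkExtractor` names are made up for this example.

```python
from collections import deque
from html.parser import HTMLParser
from urllib.parse import urljoin

# Hypothetical in-memory "web": URL -> HTML. A real crawler would fetch over HTTP.
FAKE_WEB = {
    "https://example.com/": '<a href="/about">About</a> <a href="/blog">Blog</a>',
    "https://example.com/about": '<a href="/">Home</a>',
    "https://example.com/blog": '<a href="/blog/post-1">Post</a> <a href="/about">About</a>',
    "https://example.com/blog/post-1": "<p>No links here.</p>",
}


class LinkExtractor(HTMLParser):
    """Collects href values from <a> tags."""

    def __init__(self):
        super().__init__()
        self.links = []

    def handle_starttag(self, tag, attrs):
        if tag == "a":
            for name, value in attrs:
                if name == "href" and value:
                    self.links.append(value)


def crawl(seeds, fetch, max_pages=100):
    """Breadth-first crawl: visit a URL, extract its links, queue unseen ones."""
    queue = deque(seeds)
    seen = set(seeds)
    visited = []
    while queue and len(visited) < max_pages:
        url = queue.popleft()
        html = fetch(url)
        if html is None:  # page missing or fetch failed
            continue
        visited.append(url)
        parser = LinkExtractor()
        parser.feed(html)
        for href in parser.links:
            absolute = urljoin(url, href)  # resolve relative links
            if absolute not in seen:
                seen.add(absolute)
                queue.append(absolute)
    return visited


order = crawl(["https://example.com/"], FAKE_WEB.get)
print(order)
```

The `seen` set is what keeps the crawler from looping forever when pages link back to each other, as the home and about pages do here.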

As it works, the crawler stores the page data in an index, a structured database that allows the parent service (such as Google Search) to quickly retrieve relevant results. Well-behaved crawlers follow the rules in your site’s robots.txt file to decide which URLs to skip, and they respect meta directives such as noindex (keep this page out of the index) and nofollow (do not follow the links on this page).
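As a rough sketch of how a crawler might read those meta directives, the snippet below parses a page’s robots meta tag with Python’s standard library. The `RobotsMetaParser` and `page_policy` names are hypothetical, and the sketch only looks at noindex and nofollow; real crawlers recognize more directives.

```python
from html.parser import HTMLParser


class RobotsMetaParser(HTMLParser):
    """Collects directives from <meta name="robots" content="..."> tags."""

    def __init__(self):
        super().__init__()
        self.directives = set()

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = {name: (value or "") for name, value in attrs}
        if attr.get("name", "").lower() == "robots":
            for token in attr.get("content", "").split(","):
                token = token.strip().lower()
                if token:
                    self.directives.add(token)


def page_policy(html):
    """Return whether the page may be indexed and whether its links may be followed."""
    parser = RobotsMetaParser()
    parser.feed(html)
    return {
        "index": "noindex" not in parser.directives,
        "follow": "nofollow" not in parser.directives,
    }


print(page_policy('<head><meta name="robots" content="noindex, nofollow"></head>'))
print(page_policy("<head><title>No directives</title></head>"))
```

A page with no robots meta tag is treated as indexable and followable, which matches the default behavior the paragraph above implies.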

Most crawlers also implement a crawl rate limit to avoid overloading your server with too many requests at once.
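One simple way to picture a crawl rate limit is a minimum delay between requests to the same host. The sketch below is an assumption about how such a limiter could look, not a description of any specific crawler; `PoliteScheduler` and its methods are hypothetical names, and the clock is injectable so the example runs instantly.

```python
import time


class PoliteScheduler:
    """Enforces a minimum delay between requests to the same host."""

    def __init__(self, min_delay_seconds, clock=time.monotonic):
        self.min_delay = min_delay_seconds
        self.clock = clock
        self.last_request = {}  # host -> timestamp of the last request

    def wait_time(self, host):
        """Seconds the crawler should still wait before requesting this host."""
        last = self.last_request.get(host)
        if last is None:
            return 0.0
        return max(0.0, self.min_delay - (self.clock() - last))

    def record(self, host):
        """Remember that a request to this host was just made."""
        self.last_request[host] = self.clock()


# Simulated clock so the example does not actually sleep.
now = [100.0]
scheduler = PoliteScheduler(2.0, clock=lambda: now[0])
scheduler.record("example.com")
now[0] = 100.5
print(scheduler.wait_time("example.com"))    # half a second has passed, 1.5 remain
print(scheduler.wait_time("other-site.com"))  # never requested, no wait
```

In a real crawler the caller would sleep for `wait_time(host)` before each fetch and call `record(host)` afterwards.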

Types of Web Crawlers

Understanding the different types of web crawlers helps you decide which ones to allow or block on your site.

General-purpose crawlers index the entire web for search engines. Googlebot, Bingbot, and Yandex Bot fall into this category. They aim to discover and catalog as much content as possible.

AI training crawlers visit websites to collect data used for training large language models (LLMs). GPTBot, ClaudeBot, and Google-Extended are examples. These are newer and more controversial because they use content for AI model training rather than search indexing.

Social media crawlers fetch page metadata (title, description, image) when someone shares a link on platforms like Facebook, Twitter, or LinkedIn. They generate the link preview cards you see in social feeds.

SEO and analytics crawlers scan websites to collect data for marketing and SEO tools. SEMrushBot and AhrefsBot gather backlink data, keyword rankings, and site health metrics that digital marketers rely on.

Focused crawlers target specific types of content, such as academic papers, news articles, or e-commerce product data, rather than indexing the entire web.
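To make these categories concrete, here is a small sketch that labels a user-agent string by category. The tokens come from the crawlers covered in this article; the category labels and the `classify` function are made up for illustration. User-agent strings can be spoofed, so treat the result as a first-pass label rather than proof of identity.

```python
# Lowercase user-agent tokens mapped to the categories described above.
CRAWLER_CATEGORIES = {
    "googlebot": "search",
    "bingbot": "search",
    "yandexbot": "search",
    "duckduckbot": "search",
    "applebot": "search",
    "baiduspider": "search",
    "gptbot": "ai-training",
    "claudebot": "ai-training",
    "facebookexternalhit": "social",
    "twitterbot": "social",
    "linkedinbot": "social",
    "semrushbot": "seo",
    "ahrefsbot": "seo",
}


def classify(user_agent):
    """Return the category for a user-agent string, or 'unknown'."""
    ua = user_agent.lower()
    for token, category in CRAWLER_CATEGORIES.items():
        if token in ua:
            return category
    return "unknown"


print(classify("Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"))
print(classify("Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/)"))
print(classify("Mozilla/5.0 (Windows NT 10.0; Win64; x64)"))
```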


15 Most Common Web Crawlers in 2026

Here are the 15 crawlers for 2026, organized by category. For each one, you will find its purpose, user-agent string, and key details.

Search Engine Crawlers

1. Googlebot

![Googlebot - Google web crawler bot](https://nexterwp.com/wp-content/uploads/2024/09/Google-Bot.png)

Googlebot is Google’s primary web crawler and the most active bot on the internet. It discovers and indexes web pages for Google Search, Google Images, Google News, and other Google services.

- User-Agent: Googlebot/2.1 and Googlebot-Image/1.0

- Owner: Google

- Purpose: Indexing pages for Google Search

- Crawl frequency: Continuously, with a crawl budget assigned per site based on site authority and server capacity

- Key detail: Googlebot renders JavaScript, meaning it processes dynamically loaded content the same way a browser does. Google Search Console lets you monitor Googlebot’s crawl activity and fix indexing issues.

2. Bingbot

![Bingbot - Microsoft Bing web crawler](https://nexterwp.com/wp-content/uploads/2024/09/Bing-Bot.png)

Bingbot is Microsoft’s web crawler that indexes pages for Bing Search, Yahoo Search (which is powered by Bing), and Microsoft’s AI-powered Copilot search.

- User-Agent: bingbot/2.0

- Owner: Microsoft

- Purpose: Indexing pages for Bing and Yahoo Search

- Crawl frequency: Continuous, with configurable crawl rate via Bing Webmaster Tools

- Key detail: Bingbot prioritizes mobile-friendly pages and supports IndexNow, a protocol that lets you notify Bing instantly when content changes instead of waiting for the next crawl.
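An IndexNow notification is an HTTP request that lists the URLs that changed. The sketch below builds such a request without sending it. The endpoint and JSON field names follow the public IndexNow protocol as best understood here, so check them against the current IndexNow documentation before relying on them; the host, the key, and the `build_indexnow_request` helper are hypothetical.

```python
import json
import urllib.request

# Shared IndexNow endpoint (assumption: verify against the current protocol docs).
INDEXNOW_ENDPOINT = "https://api.indexnow.org/indexnow"


def build_indexnow_request(host, key, urls):
    """Build (but do not send) a POST request announcing changed URLs."""
    payload = {
        "host": host,
        "key": key,
        # Assumes the key file is hosted at the site root as <key>.txt.
        "keyLocation": f"https://{host}/{key}.txt",
        "urlList": list(urls),
    }
    return urllib.request.Request(
        INDEXNOW_ENDPOINT,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json; charset=utf-8"},
        method="POST",
    )


request = build_indexnow_request(
    "example.com",
    "0123456789abcdef",  # hypothetical key; generate your own
    ["https://example.com/new-post/"],
)
print(request.get_method(), request.full_url)
print(request.data.decode("utf-8"))
# urllib.request.urlopen(request) would send it; omitted so the sketch has no side effects.
```

On WordPress you would normally let an SEO or IndexNow plugin handle this; the sketch only shows what such a plugin sends on your behalf.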

3. Yandex Bot

![Yandex Bot - Yandex search engine crawler](https://nexterwp.com/wp-content/uploads/2024/09/Yandex-Bot.png)

Yandex Bot is the web crawler for Yandex, the largest search engine in Russia and the fourth-largest search engine globally by market share.

- User-Agent: YandexBot/3.0

- Owner: Yandex

- Purpose: Indexing pages for Yandex Search

- Crawl frequency: Continuous

- Key detail: Yandex Bot supports multiple languages and prioritizes geographically relevant content. If your site targets Russian, Turkish, or CIS-region audiences, optimizing for Yandex Bot is important. Yandex provides its own Webmaster Tools for monitoring crawl activity.

4. DuckDuckBot

![DuckDuckBot - DuckDuckGo privacy search crawler](https://nexterwp.com/wp-content/uploads/2024/09/Duckduck-Bot.png)

DuckDuckBot is the web crawler used by DuckDuckGo, the privacy-focused search engine that does not track users or personalize search results.

- User-Agent: DuckDuckBot/1.1

- Owner: DuckDuckGo

- Purpose: Indexing pages for DuckDuckGo Search

- Crawl frequency: Less frequent than Googlebot or Bingbot

- Key detail: DuckDuckGo combines results from its own crawler with more than 400 other sources, including Bing and Wikipedia. DuckDuckBot respects robots.txt.

5. Applebot

![Applebot - Apple Siri and Spotlight crawler](https://nexterwp.com/wp-content/uploads/2024/09/Apple-Bot.png)

Applebot is Apple’s web crawler, introduced in 2015. It powers search results in Siri, Spotlight Suggestions, and Safari’s search features.

- User-Agent: Applebot/0.1

- Owner: Apple

- Purpose: Powering Siri, Spotlight, and Safari search suggestions

- Crawl frequency: Moderate

- Key detail: Applebot renders JavaScript and CSS, so it sees your pages as users do. If no specific Applebot rules exist in robots.txt, it follows Googlebot’s directives as a fallback. Ranking factors include user engagement, content relevance, link quality, and web design characteristics.

6. Baidu Spider

![Baidu Spider - Baidu search engine crawler](https://nexterwp.com/wp-content/uploads/2024/09/Baidu-Spider.png)

Baidu Spider is the web crawler for Baidu, the dominant search engine in China with over 70% market share in mainland China.

- User-Agent: Baiduspider/2.0

- Owner: Baidu

- Purpose: Indexing pages for Baidu Search

- Crawl frequency: Continuous, configurable via Baidu Webmaster Tools (Baidu Ziyuan)

- Key detail: If you target Chinese-speaking audiences, Baidu Spider is essential. It handles Chinese character sets natively and offers image-specific (Baiduspider-image) and video-specific (Baiduspider-video) variants. Baidu Webmaster Tools lets you submit sitemaps and monitor crawl issues.

AI Training Crawlers

7. GPTBot (OpenAI)

GPTBot is OpenAI’s web crawler that collects publicly available web content to train and improve AI models including ChatGPT.

- User-Agent: GPTBot/1.2

- Owner: OpenAI

- Purpose: Collecting training data for AI models

- Crawl frequency: Periodic

- Key detail: GPTBot is distinct from ChatGPT-User, which browses the web in real time when a ChatGPT user asks a question. You can block GPTBot in robots.txt to prevent your content from being used for AI training while still allowing ChatGPT to browse your site.

8. ClaudeBot (Anthropic)

ClaudeBot is Anthropic’s web crawler that gathers web content to train Claude, Anthropic’s AI assistant.

- User-Agent: ClaudeBot/1.0

- Owner: Anthropic

- Purpose: Collecting training data for Claude AI models

- Crawl frequency: Periodic

- Key detail: Anthropic provides clear documentation on how to block ClaudeBot via robots.txt. Like GPTBot, ClaudeBot only collects publicly accessible pages and respects standard crawl directives.

9. Google-Extended

Google-Extended is not a separate crawler but a robots.txt control token. Google’s existing crawlers do the fetching, and the token tells Google whether content from your site may be used to train and improve its Gemini models.

- User-Agent: Google-Extended (a robots.txt token only; it does not appear as a user-agent string in server logs)

- Owner: Google

- Purpose: Controlling whether your content is used for Google’s generative AI models (Gemini)

- Crawl frequency: Not applicable (crawling is done by Google’s existing crawlers)

- Key detail: Blocking Google-Extended in robots.txt prevents your content from being used for Gemini training but does not affect your Google Search rankings or Googlebot’s indexing. It also does not control AI Overviews, which are part of Google Search. This gives site owners granular control over how Google uses their content.

Social Media Crawlers

10. Facebook Crawler

![Facebook Crawler - Meta social media bot](https://nexterwp.com/wp-content/uploads/2024/09/Facebook-External-Hit.png)

Facebook Crawler (also known as Facebot) accesses your site when someone shares a link on Facebook, Instagram, WhatsApp, or Messenger. It fetches Open Graph metadata to build the link preview card.

- User-Agent: facebookexternalhit/1.1 and facebookcatalog/1.0

- Owner: Meta

- Purpose: Generating link preview cards on Meta platforms

- Crawl frequency: On-demand (triggered when a link is shared)

- Key detail: Facebook Crawler reads Open Graph tags (og:title, og:description, og:image) from your page’s HTML. If these tags are missing, the preview card will be incomplete. Use Meta’s Sharing Debugger tool to test and refresh your link previews.
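A quick way to spot an incomplete preview before sharing is to check a page’s HTML for the three Open Graph tags named above. This is a minimal sketch using Python’s standard library; `OpenGraphParser` and `missing_og_tags` are hypothetical names, and it does not replicate how Meta’s crawler actually parses pages.

```python
from html.parser import HTMLParser

# The three Open Graph tags this article names for link previews.
PREVIEW_OG_TAGS = ("og:title", "og:description", "og:image")


class OpenGraphParser(HTMLParser):
    """Collects <meta property="og:..." content="..."> values."""

    def __init__(self):
        super().__init__()
        self.tags = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        prop = attr.get("property") or ""
        if prop.startswith("og:") and attr.get("content"):
            self.tags.setdefault(prop, attr["content"])  # keep the first value seen


def missing_og_tags(html):
    """Return the preview tags that the page does not define."""
    parser = OpenGraphParser()
    parser.feed(html)
    return [name for name in PREVIEW_OG_TAGS if name not in parser.tags]


sample_page = """
<head>
  <meta property="og:title" content="Web Crawlers List">
  <meta property="og:image" content="https://example.com/cover.png">
</head>
"""
print(missing_og_tags(sample_page))
```

The sample page omits og:description, so that is the tag the check reports.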

11. Twitterbot

Twitterbot (now X Bot) fetches page metadata when a link is shared on X (formerly Twitter) to generate Twitter Card previews.

- User-Agent: Twitterbot/1.0

- Owner: X Corp (formerly Twitter)

- Purpose: Generating Twitter Card previews

- Crawl frequency: On-demand (triggered when a link is shared)

- Key detail: Twitterbot reads Twitter Card meta tags (twitter:card, twitter:title, twitter:description, twitter:image) from your page. If Twitter-specific tags are missing, it falls back to Open Graph tags. Use X’s Card Validator to preview how your links will appear.
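The fallback behavior described above can be sketched as a lookup that prefers a Twitter-specific tag and uses the Open Graph equivalent when it is absent. This illustrates the idea only and is not X’s actual implementation; `MetaCollector` and `resolve_card` are hypothetical names.

```python
from html.parser import HTMLParser


class MetaCollector(HTMLParser):
    """Collects <meta> values keyed by their name= or property= attribute."""

    def __init__(self):
        super().__init__()
        self.meta = {}

    def handle_starttag(self, tag, attrs):
        if tag != "meta":
            return
        attr = dict(attrs)
        key = attr.get("name") or attr.get("property")
        if key and attr.get("content"):
            self.meta.setdefault(key.lower(), attr["content"])


def resolve_card(html):
    """Pick each preview field from twitter:* first, then og:* as a fallback."""
    collector = MetaCollector()
    collector.feed(html)
    card = {}
    for field in ("title", "description", "image"):
        value = collector.meta.get(f"twitter:{field}") or collector.meta.get(f"og:{field}")
        if value:
            card[field] = value
    return card


sample_page = """
<head>
  <meta name="twitter:title" content="Title for X">
  <meta property="og:title" content="Title for everyone else">
  <meta property="og:image" content="https://example.com/cover.png">
</head>
"""
print(resolve_card(sample_page))
```

Here the title comes from the Twitter tag, the image falls back to Open Graph, and the description is left out because neither tag defines it.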

12. LinkedInBot

LinkedInBot fetches page metadata when a link is shared on LinkedIn to generate the link preview that appears in posts and messages.

- User-Agent: LinkedInBot/1.0

- Owner: LinkedIn (Microsoft)

- Purpose: Generating link previews on LinkedIn

- Crawl frequency: On-demand (triggered when a link is shared)

- Key detail: LinkedInBot reads Open Graph tags, similar to Facebook Crawler. Use LinkedIn’s Post Inspector tool to test and refresh your link previews. For B2B websites, optimizing for LinkedInBot is especially important since LinkedIn is a primary traffic source.

SEO and Analytics Crawlers

13. SEMrushBot

![SEMrushBot - Semrush SEO tool crawler](https://nexterwp.com/wp-content/uploads/2024/09/SEMrushBot.png)

SEMrushBot is the web crawler used by Semrush, one of the largest SEO and digital marketing platforms. It collects data on backlinks, keyword rankings, and website performance.

- User-Agent: SemrushBot/7

- Owner: Semrush

- Purpose: Collecting SEO and marketing data

- Crawl frequency: Continuous

- Key detail: SEMrushBot does not affect your search engine rankings. It collects data that Semrush users access for competitor research, backlink analysis, and site audits. You can safely block it if you do not want your site’s data appearing in Semrush’s database.

14. AhrefsBot

AhrefsBot is the web crawler for Ahrefs, a popular SEO toolset known for its backlink database. It operates one of the most active crawlers on the web.

- User-Agent: AhrefsBot/7.0

- Owner: Ahrefs

- Purpose: Building Ahrefs’ backlink index and SEO database

- Crawl frequency: Very frequent (according to Ahrefs, about 8 billion pages every 24 hours)

- Key detail: Because it crawls so heavily, AhrefsBot can strain smaller servers. If it causes performance issues, you can reduce its crawl rate or block it via robots.txt.

15. Slurp Bot (Yahoo)

![Slurp Bot - Yahoo web crawler](https://nexterwp.com/wp-content/uploads/2024/09/Slurp-Bot.png)

Slurp Bot was Yahoo’s web crawler. While Yahoo Search is now powered by Bing’s index, Slurp Bot historically played a major role in web indexing and still appears in some server logs.

- User-Agent: Slurp

- Owner: Yahoo (formerly part of Oath/Verizon Media)

- Purpose: Historically indexed pages for Yahoo Search

- Crawl frequency: Rare (Yahoo Search now uses Bingbot)

- Key detail: Slurp Bot is largely retired since Yahoo transitioned to Bing’s search index. You may still see it in legacy server logs, but it no longer significantly impacts your search visibility.

How to Control Web Crawler Access on WordPress

WordPress gives you several ways to manage which crawlers can access your site and which pages they can index.

robots.txt: Your site’s robots.txt file (located at yourdomain.com/robots.txt) is the primary way to control crawler access. You can block specific user-agents, disallow specific directories, or set a crawl delay (a non-standard directive that some crawlers, including Googlebot, ignore).

    # Block AI training crawlers
    User-agent: GPTBot
    Disallow: /

    User-agent: ClaudeBot
    Disallow: /

    User-agent: Google-Extended
    Disallow: /

    # Allow search engine crawlers
    User-agent: Googlebot
    Allow: /

    User-agent: Bingbot
    Allow: /

Meta robots tags: Add <meta name="robots" content="noindex, nofollow"> to specific pages you want to exclude from indexing entirely.
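Before deploying robots.txt rules like the ones shown earlier in this section, you can sanity-check them locally. The sketch below feeds the same rules to Python’s built-in `urllib.robotparser` and asks which user-agent tokens may fetch a page. The `example.com` URL is a placeholder, and each real crawler uses its own parser, so treat this as an approximation of how they will read the file.

```python
from urllib.robotparser import RobotFileParser

# Each User-agent line sits directly above its rule; this parser treats a
# blank line as the end of a group.
ROBOTS_TXT = """\
# Block AI training crawlers
User-agent: GPTBot
Disallow: /

User-agent: ClaudeBot
Disallow: /

User-agent: Google-Extended
Disallow: /

# Allow search engine crawlers
User-agent: Googlebot
Allow: /

User-agent: Bingbot
Allow: /
"""

parser = RobotFileParser()
parser.parse(ROBOTS_TXT.splitlines())

results = {}
for agent in ("GPTBot", "ClaudeBot", "Google-Extended", "Googlebot", "Bingbot"):
    results[agent] = parser.can_fetch(agent, "https://example.com/any-page/")
    print(f"{agent:16} {'allowed' if results[agent] else 'blocked'}")
```

Crawlers that are not named in the file, and have no `User-agent: *` group to fall back on, are allowed by default.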

WordPress SEO plugins: Plugins like Rank Math and Yoast SEO let you set noindex/nofollow rules per page, per post type, or per taxonomy from the WordPress dashboard without editing code.

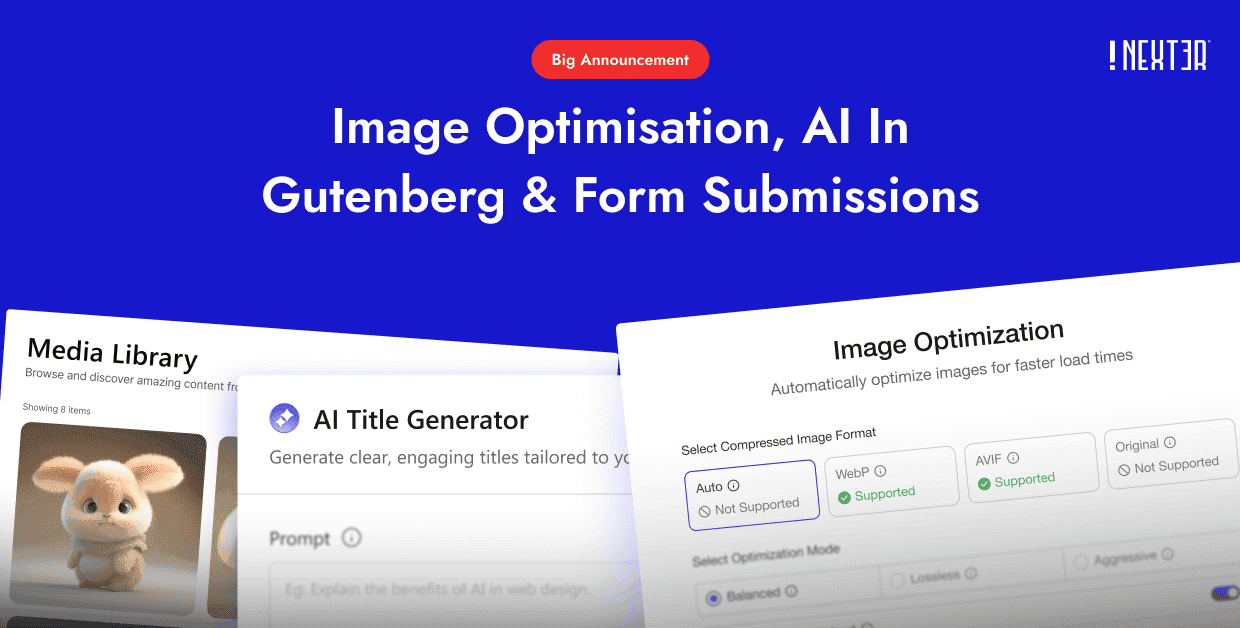

Nexter Extension includes built-in security features that help protect your WordPress site from malicious bots and unwanted crawler traffic. With features like XML-RPC disabling, login protection, and IP-based access controls, you can manage bot access alongside your 50+ other site management tools from one unified dashboard.


Complete Web Crawlers List: Summary Table

| Crawler | Owner | Category | User-Agent | Still Active? |
|---|---|---|---|---|
| Googlebot | Google | Search Engine | Googlebot/2.1 | Yes |
| Bingbot | Microsoft | Search Engine | bingbot/2.0 | Yes |
| Yandex Bot | Yandex | Search Engine | YandexBot/3.0 | Yes |
| DuckDuckBot | DuckDuckGo | Search Engine | DuckDuckBot/1.1 | Yes |
| Applebot | Apple | Search Engine | Applebot/0.1 | Yes |
| Baidu Spider | Baidu | Search Engine | Baiduspider/2.0 | Yes |
| GPTBot | OpenAI | AI Training | GPTBot/1.2 | Yes |
| ClaudeBot | Anthropic | AI Training | ClaudeBot/1.0 | Yes |
| Google-Extended | Google | AI Training | Google-Extended | Yes |
| Facebook Crawler | Meta | Social Media | facebookexternalhit/1.1 | Yes |
| Twitterbot | X Corp | Social Media | Twitterbot/1.0 | Yes |
| LinkedInBot | LinkedIn (Microsoft) | Social Media | LinkedInBot/1.0 | Yes |
| SEMrushBot | Semrush | SEO Tool | SemrushBot/7 | Yes |
| AhrefsBot | Ahrefs | SEO Tool | AhrefsBot/7.0 | Yes |
| Slurp Bot | Yahoo | Search Engine | Slurp | Mostly retired |


Wrapping Up

Web crawlers are the backbone of how search engines, AI platforms, and social networks discover and organize content across the internet. This list of crawlers represents the 15 bots and spiders you are most likely to encounter in your server logs in 2026.

For WordPress site owners, the key takeaway is to welcome crawlers that help your SEO (Googlebot, Bingbot) while controlling access for AI training bots (GPTBot, ClaudeBot, Google-Extended) based on your preferences. Use robots.txt and SEO plugins to set clear rules for each crawler type.

If you want a WordPress theme that outputs clean, semantic HTML that crawlers can index efficiently, Nexter Theme loads in under 0.5 seconds with zero jQuery and smart asset management (1 CSS + 1 JS per page). Combined with 90+ Gutenberg blocks and 50+ site extensions, Nexter gives you a crawler-friendly WordPress stack without plugin bloat. See Nexter pricing to get started.


FAQs on Web Crawlers

What are the most common web crawlers?

The best-known web crawlers are Googlebot (Google Search), Bingbot (Bing Search), GPTBot (OpenAI), Facebook Crawler (Meta), AhrefsBot (Ahrefs), and SEMrushBot (Semrush). Googlebot is the most active web crawler, indexing billions of pages for Google Search. AI training crawlers like GPTBot and ClaudeBot have become increasingly common since 2023. See the full crawler list above for all 15 bots.

Is ChatGPT a web crawler?

ChatGPT itself is not a web crawler. However, OpenAI operates two related bots. GPTBot crawls websites to collect training data for AI models. ChatGPT-User browses the web in real time when a ChatGPT user asks a question that requires current information. You can block GPTBot via robots.txt while still allowing ChatGPT-User.

How do I block AI crawlers from my WordPress site?

Add disallow rules to your robots.txt file for each AI crawler you want to block. The most common AI crawler user-agents are GPTBot (OpenAI), ClaudeBot (Anthropic), Google-Extended (Google Gemini), and CCBot (Common Crawl). WordPress SEO plugins like Rank Math also let you manage robots.txt rules from the dashboard.

How do I check which crawlers are visiting my site?

Check your server access logs for bot user-agent strings. Most WordPress hosting dashboards (cPanel, Plesk, RunCloud) provide log viewers. You can also use tools like Google Search Console (for Googlebot activity), Bing Webmaster Tools (for Bingbot), or third-party services like Cloudflare Analytics to monitor bot traffic.
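If you can download your access log, a short script can tally bot visits by user-agent token. The sketch below assumes the common "combined" log format, where the user agent is the last quoted field on each line. The sample log lines are made up for illustration (using reserved documentation IP ranges), and `count_bots` is a hypothetical helper.

```python
import re
from collections import Counter

# Tokens to look for, taken from the crawlers covered in this article.
BOT_TOKENS = ("Googlebot", "bingbot", "GPTBot", "ClaudeBot", "AhrefsBot", "SemrushBot")

# In the combined log format, the user agent is the last double-quoted field.
UA_PATTERN = re.compile(r'"([^"]*)"\s*$')

# Made-up sample lines; replace with the contents of your real access log.
SAMPLE_LOG = """\
192.0.2.10 - - [10/Jan/2026:10:00:00 +0000] "GET / HTTP/1.1" 200 5123 "-" "Mozilla/5.0 (compatible; Googlebot/2.1; +http://www.google.com/bot.html)"
203.0.113.7 - - [10/Jan/2026:10:00:05 +0000] "GET /blog HTTP/1.1" 200 8042 "-" "Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/)"
198.51.100.4 - - [10/Jan/2026:10:00:09 +0000] "GET /blog HTTP/1.1" 200 8042 "https://example.com/" "Mozilla/5.0 (Windows NT 10.0; Win64; x64)"
203.0.113.7 - - [10/Jan/2026:10:00:12 +0000] "GET /about HTTP/1.1" 200 3011 "-" "Mozilla/5.0 (compatible; AhrefsBot/7.0; +http://ahrefs.com/robot/)"
"""


def count_bots(lines):
    """Count requests per known bot token, ignoring lines with no match."""
    counts = Counter()
    for line in lines:
        match = UA_PATTERN.search(line)
        if not match:
            continue
        user_agent = match.group(1).lower()
        for token in BOT_TOKENS:
            if token.lower() in user_agent:
                counts[token] += 1
                break
    return counts


counts = count_bots(SAMPLE_LOG.splitlines())
for token, hits in counts.most_common():
    print(f"{token}: {hits}")
```

Because user-agent strings can be faked, a high count for a well-known bot is a prompt to investigate further rather than confirmation that the traffic is genuine.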

Do web crawlers affect website performance?

Yes, aggressive crawling can increase server load and slow down your site for real visitors. High-frequency crawlers like AhrefsBot (8 billion pages/day) can strain smaller servers. Use crawl rate settings in your robots.txt or webmaster tools to limit how fast bots can crawl your site. Nexter Extension’s 50+ site management tools include performance optimization features that help keep your site fast even under heavy bot traffic.

What is the difference between a web crawler and a web scraper?

A web crawler systematically discovers and indexes pages by following links across the web. A web scraper extracts specific data from web pages for a targeted purpose, such as collecting product prices or contact information. Crawlers are typically operated by search engines and follow robots.txt rules. Scrapers are often custom-built and may not respect access controls.

Does WebCrawler still exist?

Yes, WebCrawler (webcrawler.com) still exists as a metasearch engine. It was one of the first web search engines, launched in 1994. Today it aggregates results from Google and Yahoo rather than operating its own web crawler. It is not related to the general concept of web crawlers (automated bots) discussed in this article.